At the start of 2026, I sat down and wrote out our AI development methodology. Not a loose set of habits, but an actual document - the rules, the workflow, the things we'd learned the hard way about what works and what doesn't when you use AI to build production software every day.

I'm sharing it because every "how to use AI for development" article I've read has been written by someone who either works at an AI company or used AI for a weekend project. We've been using it in production, every day, for months. We've made every mistake you can make. And we've landed on a methodology that actually works.

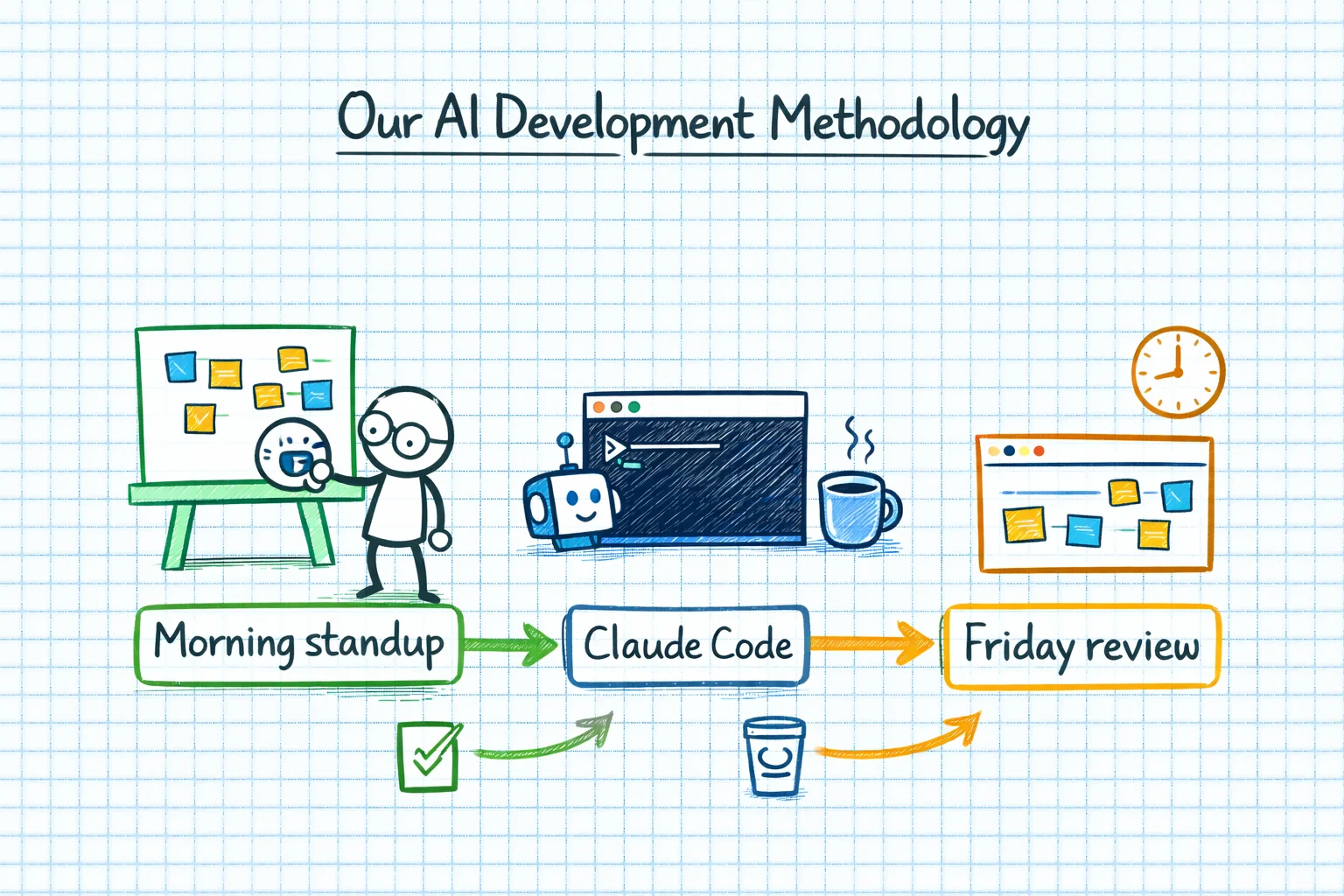

The Daily Workflow

Here's what a typical development day looks like in our team:

1. Pick up a task. We use Task Board for project management. The developer grabs the next priority ticket.

2. Let Claude read the ticket. Via our MCP integration, Claude reads the task description, acceptance criteria, and any linked context directly from Task Board. No copy-pasting. No "let me describe the problem". The AI reads the source of truth.

3. Plan before you build. This is non-negotiable. Before writing any code, Claude proposes an approach. Which files need changing? What's the data flow? Are there edge cases? The developer reviews the plan, pushes back where needed, and agrees the approach before a single line of code is written.

4. Implement with the AI. Claude Code writes the code. The developer reviews, tests, and iterates. This isn't "accept everything the AI produces". It's a conversation - the developer directing the architecture while the AI handles the implementation detail.

5. Test. Obvious, but worth stating: AI-generated code gets the same testing rigour as human-written code. More, actually, because AI output is often confidently wrong, and automated tests catch code that looks right but isn't.

6. Commit with context. Commit messages explain what was done and why. If the AI helped, the commit should still be comprehensible to someone who doesn't know AI was involved.
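To make step 6 concrete, here is a minimal sketch of a check that a commit message has both a "what" and a "why". It assumes a convention we haven't spelled out above: the subject line says what changed, and a body after a blank line explains why. The function name `explains_what_and_why` is hypothetical, and this is an illustration rather than our actual tooling.

```python
def explains_what_and_why(message: str) -> bool:
    """Return True if the message has a subject, a blank line, and a non-empty body."""
    # Lines starting with '#' are comments in git's default message template.
    lines = [ln.rstrip() for ln in message.splitlines() if not ln.startswith("#")]

    # Ignore blank lines at the very start and end of the message.
    while lines and not lines[0]:
        lines.pop(0)
    while lines and not lines[-1]:
        lines.pop()

    # Need at least: subject, blank separator, one body line.
    if len(lines) < 3:
        return False

    subject, separator, body = lines[0], lines[1], lines[2:]
    return bool(subject) and separator == "" and any(ln.strip() for ln in body)
```

A check like this could be wired into a git `commit-msg` hook, which receives the path to the message file as its first argument. It can't tell whether the explanation is any good, only that one exists. The rest is on the developer.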

The Anti-Vibe-Coding Rules

"Vibe coding" is when you let the AI generate code, glance at it, think "that looks about right", and move on. It's the development equivalent of signing a contract without reading it. It feels efficient. It's actually reckless.

We have explicit rules against it:

You must understand WHY the code works, not just accept that it works. If Claude generates a solution and you can't explain the approach to a colleague, you haven't finished the task. You've delegated your understanding, and that's a debt that compounds with interest.

If you can't explain what changed, you can't commit it. Every commit should be something the developer can walk through line by line. Not because we're being pedantic, but because when something breaks at 2am, "the AI wrote it and I don't really understand it" is not a debugging strategy.

No blind copy-paste from AI output. Read it. Understand it. Modify it if needed. Then integrate it. The AI is a starting point, not a finished product.

These rules sound obvious. They are not. The temptation to vibe-code is strong, especially when you're under pressure and the AI's output looks clean. Resist it.

The Context Document

One of the best things we implemented early on was the context document - a living markdown file that captures what the AI knows about our project. We call it CLAUDE_CONTEXT.md and it lives in the root of each repository.

It includes: the project architecture, key design decisions, naming conventions, common patterns, known issues, and anything else that would help the AI (or a new developer) understand the codebase. We update it weekly.

This solves a real problem. AI models don't have persistent memory across sessions. Every new conversation starts from zero. Without a context document, you spend the first ten minutes of every session re-explaining your project structure. With one, the AI reads it and starts at the right level of understanding.
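If you want to keep the context document honest, a small check can flag topics that have gone missing. The sketch below assumes each topic from the list above appears somewhere in a markdown heading. That layout is an assumption for illustration, not a description of our actual file, and `REQUIRED_TOPICS` and `missing_topics` are hypothetical names.

```python
from __future__ import annotations

# Topics taken from the list above; the exact heading wording is an assumption.
REQUIRED_TOPICS = [
    "architecture",
    "design decisions",
    "naming conventions",
    "common patterns",
    "known issues",
]


def missing_topics(markdown_text: str) -> list[str]:
    """Return the required topics that no markdown heading mentions."""
    # Collect heading text, lowercased, with the leading '#' markers removed.
    headings = [
        line.lstrip("#").strip().lower()
        for line in markdown_text.splitlines()
        if line.startswith("#")
    ]
    # A topic counts as covered if it appears inside any heading.
    return [t for t in REQUIRED_TOPICS if not any(t in h for h in headings)]
```

For example, a file with only "Architecture", "Key design decisions", and "Naming conventions" headings would come back with `["common patterns", "known issues"]` still to write.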

The Friday Review

This is the part that separates our approach from "just use Copilot and ship faster". Every Friday, developers review the week's AI-assisted work with the team. The format is simple:

Explain back what was done. Not what the AI did. What you did, using the AI as a tool. If you can't explain the architectural decisions, the trade-offs, and the reasoning, that's a red flag. It means the AI was driving and you were a passenger.

Review the context document. Has it been updated? Does it still accurately represent the project? Are there new patterns or decisions that should be captured?

Share what didn't work. This is crucial. The AI gets things wrong. Approaches that looked promising fail. Sharing these saves the rest of the team from hitting the same walls.

The Friday review serves two purposes. First, it's quality control - it catches vibe-coding before it becomes technical debt. Second, it's a learning mechanism - the team collectively gets better at working with AI by sharing what works and what doesn't.

When NOT to Use AI

This is the section I'm most proud of, because it's the one you'll never see in a blog post written by someone selling AI tools.

Don't use AI when you're learning a new concept. If you've never used SignalR before, don't ask Claude to write your SignalR implementation. You'll end up with working code that you don't understand, which means you can't debug it, extend it, or explain it. Learn the concept first - read the docs, build a toy example, understand the mental model. Then use AI to accelerate your implementation.

Don't use AI when you've failed three times. If you've gone back and forth with Claude three times and the problem isn't solved, stop. The AI is going in circles. Step back, think about the problem from first principles, and either solve it yourself or bring in a human colleague. The fourth AI attempt will not magically succeed when the first three failed.

Don't use AI for novel debugging. When a bug is genuinely weird - when the symptoms don't match any known pattern, when the error messages are misleading, when the root cause is a subtle interaction between systems - AI struggles. It pattern-matches against known problems, and by definition, novel bugs don't match known patterns. These are the moments when human intuition and deep system knowledge earn their keep.

Don't use AI as a crutch. If you find yourself unable to write basic code without AI assistance, that's not productivity. That's dependency. Maintain your ability to code independently. Use AI to go faster, not to go at all.

The Setup

For anyone who wants to adopt a similar workflow, here's our practical setup:

MCP servers: Task Board (project management), MongoDB (database access), Chrome (browser interaction). Each gives Claude direct access to a system we use daily.

Custom commands: We've built slash commands for common workflows - understanding a codebase section, scaffolding new components, running code reviews. These encode our patterns so the AI follows our conventions, not generic best practices.

Context files: CLAUDE_CONTEXT.md in every repo. Updated weekly. Treated as seriously as any other documentation.

Claude Code as the primary interface. Not the chat window. Claude Code, in the terminal, connected to the codebase. The chat interface is for strategic thinking and planning. Claude Code is for building.
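The "updated weekly" rule for CLAUDE_CONTEXT.md is easy to let slip, so it helps to check it mechanically. This is a rough sketch, assuming the file is tracked in git and that "last updated" means the most recent commit that touched it. The helper names `is_stale` and `last_commit_time` are hypothetical.

```python
from __future__ import annotations

import subprocess
from datetime import datetime, timedelta, timezone

MAX_AGE = timedelta(days=7)  # "updated weekly"


def is_stale(last_updated: datetime, now: datetime, max_age: timedelta = MAX_AGE) -> bool:
    """Return True if the document is older than the allowed age."""
    return now - last_updated > max_age


def last_commit_time(path: str = "CLAUDE_CONTEXT.md") -> datetime | None:
    """Committer date of the latest commit touching `path`, or None if there is none."""
    # %ct prints the committer date as a UNIX timestamp.
    out = subprocess.run(
        ["git", "log", "-1", "--format=%ct", "--", path],
        capture_output=True,
        text=True,
        check=True,
    ).stdout.strip()
    return datetime.fromtimestamp(int(out), tz=timezone.utc) if out else None
```

Run from the repository root, `is_stale(last_commit_time(), datetime.now(timezone.utc))` gives a yes/no answer to bring to the Friday review, provided `last_commit_time()` did not return `None`. A recent commit doesn't prove the content is accurate, so this complements the review rather than replacing it.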

Six Months In

We've been developing this methodology for about six months now. It's not perfect. We still have days where someone vibe-codes something and it causes a problem. We still have weeks where the context document gets neglected. We still occasionally use AI when we should be learning the fundamentals.

But the trajectory is clear. We're shipping faster, with fewer bugs, with better documentation, and with a team that's growing more capable - not more dependent. The methodology is the reason.

AI without methodology is just autocomplete with delusions of grandeur. AI with a thoughtful, honest, battle-tested methodology is a genuine multiplier.

Here's to the AI dev era. We're figuring it out as we go, and we're not pretending otherwise.